CLIs are eating MCPs, and the industry is converging on this idea. MCPs, for all their merit, can be token-hungry, slow, and unreliable for complex tool chaining. Coding agents, on the other hand, have become very good at working with CLIs, and in fact they are far more comfortable with CLI tools than with MCP.

With Composio's Universal CLI, your coding agents can talk to over 1,000 SaaS applications. With Ollama, agents can run a custom prompt with llama2, get model info for all installed models, generate a text completion using mistral, and more, all without worrying about authentication.

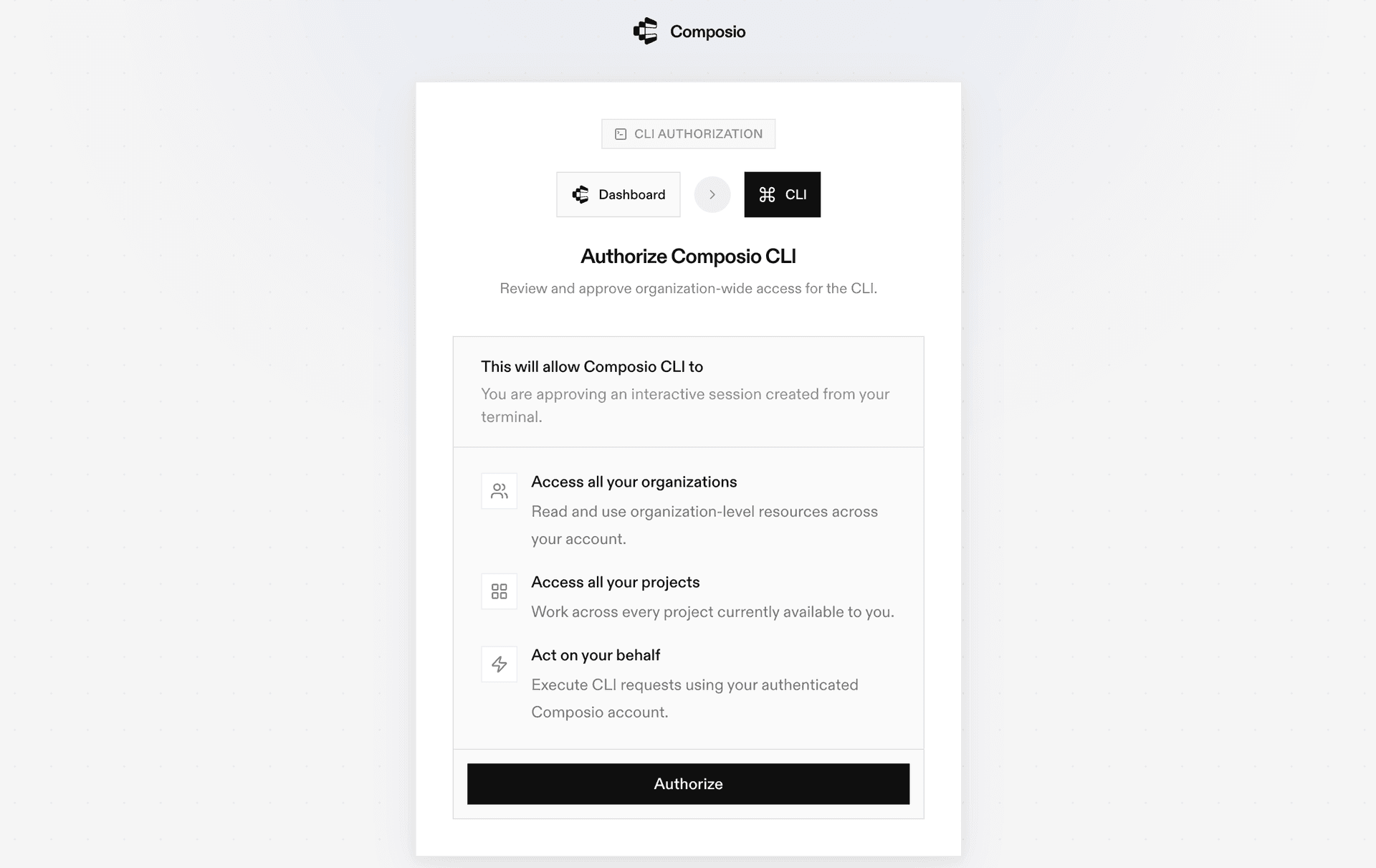

This guide walks you through the Composio Universal CLI and explains how to connect it with coding agents such as Claude Code, Codex, and OpenCode for end-to-end Ollama automation.