Codex is one of the most popular coding harnesses, and MCP makes the experience even better. With the Firecrawl MCP integration, you can crawl sites, scrape pages, extract structured data, and much more, all without leaving the terminal or the app, whichever you prefer.


Why use Composio?

Apart from a managed and hosted MCP server, you will get:

- CodeAct: A dedicated workbench that lets GPT write its own code to handle complex tool chaining, reducing back-and-forth with the LLM for frequent tool calls.

- Large tool responses: Handled for you to minimise context rot.

- Dynamic just-in-time access to 20,000 tools across 1000+ other apps for cross-app workflows. It loads only the tools you need, so GPTs aren't overwhelmed by tools you don't need.

How to install Firecrawl MCP in Codex

Run the setup command

Run this command in your terminal to add the Composio MCP server to Codex.

This opens a browser window where you authorize Codex to access your Composio account.

(Optional) Authenticate with OAuth

To authenticate manually, run the login command to open a browser window and authorize Codex to access your Composio account.

Verify the connection

Run codex mcp list to confirm Composio appears as a registered MCP server.
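If you would rather script this check, the following is a minimal sketch. It assumes only that the output of codex mcp list mentions the server name somewhere in its text; the exact output format is not specified here, so the match is a plain case-insensitive substring search. The helper names are hypothetical.

```python
import shutil
import subprocess


def server_listed(listing: str, name: str = "composio") -> bool:
    """Return True if any line of the listing mentions the server name."""
    needle = name.lower()
    return any(needle in line.lower() for line in listing.splitlines())


def composio_registered() -> bool:
    """Run `codex mcp list` and look for a Composio entry in its output."""
    if shutil.which("codex") is None:
        raise RuntimeError("codex CLI not found on PATH")
    result = subprocess.run(
        ["codex", "mcp", "list"],
        capture_output=True,
        text=True,
        check=True,  # raise if the CLI exits with a non-zero status
    )
    return server_listed(result.stdout)
```

Calling composio_registered() after setup should return True if the server was added; if it returns False, re-run the setup command.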

Codex App

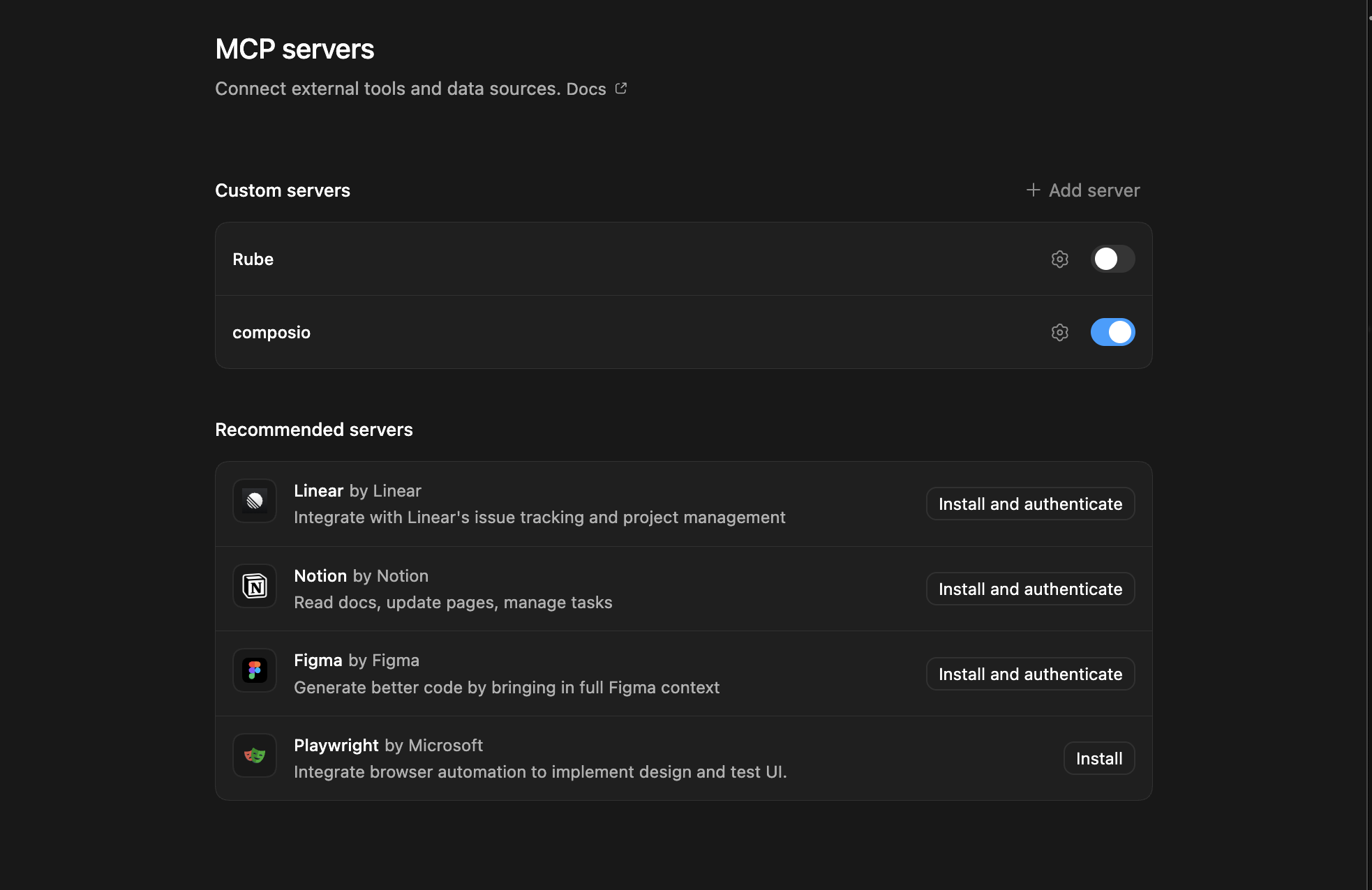

Codex App follows the same approach as VS Code.

- Click ⚙️ on the bottom left → MCP Servers → + Add servers → Streamable HTTP.

- Fill the header and Key fields with { "x-consumer-api-key" = "ck_*******" }.

- The Key is your Composio API key, which you can find on dashboard.composio.dev.

- Click on Authenticate and authorize Codex to access your Composio account, and you're all set.

- Restart and verify that the server appears in .codex/config.toml.

What is the Firecrawl MCP server, and what's possible with it?

The Firecrawl MCP server is an implementation of the Model Context Protocol that connects your AI agents and assistants, such as Claude and Cursor, directly to your Firecrawl account. It provides structured and secure access to automated web crawling, scraping, and data extraction, so your agent can perform actions like indexing sites, extracting structured content, mapping URLs, and searching the web on your behalf.

- Automated web crawling and indexing: Let your agent launch and manage web crawl jobs to gather content or index entire websites efficiently.

- Structured data extraction: Instruct your agent to extract targeted data from web pages using custom prompts or schemas, turning unstructured sites into actionable information.

- URL mapping and discovery: Have the agent explore and map all URLs within a website, including options for subdomain inclusion, sitemap processing, or search-based discovery.

- On-demand scraping and content retrieval: Enable your agent to scrape specific URLs, retrieve page content, and even extract structured JSON using LLM-powered methods.

- Integrated web search and data collection: Task your agent with running web searches, scraping top result pages, and returning relevant details, all in one workflow.
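Under the hood, each of these actions reaches the server as an MCP tool call, which is a JSON-RPC 2.0 request with the method tools/call. The sketch below only builds such a request body; it does not send it. The tool name FIRECRAWL_SCRAPE and its url argument are hypothetical placeholders, since the actual tool names and schemas are whatever the server advertises.

```python
import json


def tool_call_request(tool: str, arguments: dict, request_id: int = 1) -> str:
    """Serialise a JSON-RPC 2.0 `tools/call` request for an MCP server."""
    return json.dumps(
        {
            "jsonrpc": "2.0",
            "id": request_id,
            "method": "tools/call",
            "params": {"name": tool, "arguments": arguments},
        }
    )


# Hypothetical tool name and argument, for illustration only.
payload = tool_call_request("FIRECRAWL_SCRAPE", {"url": "https://example.com"})
```

In practice Codex builds and sends these requests for you; the sketch is only meant to show what a natural language instruction turns into on the wire.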


Conclusion

You've successfully integrated Firecrawl with Codex using Composio's MCP server. Now you can interact with Firecrawl directly from your terminal, VS Code, or the Codex App using natural language commands.

Key benefits of this setup:

- Seamless integration across CLI, VS Code, and standalone app

- Natural language commands for Firecrawl operations

- Managed authentication through Composio

- Access to 20,000+ tools across 1000+ apps for cross-app workflows

- CodeAct workbench for complex tool chaining

Next steps:

- Try asking Codex to perform various Firecrawl operations

- Explore cross-app workflows by connecting more toolkits

- Build automation scripts that leverage Codex's AI capabilities